Business challenges

Solution Overview

Business Impact

Technology Stack

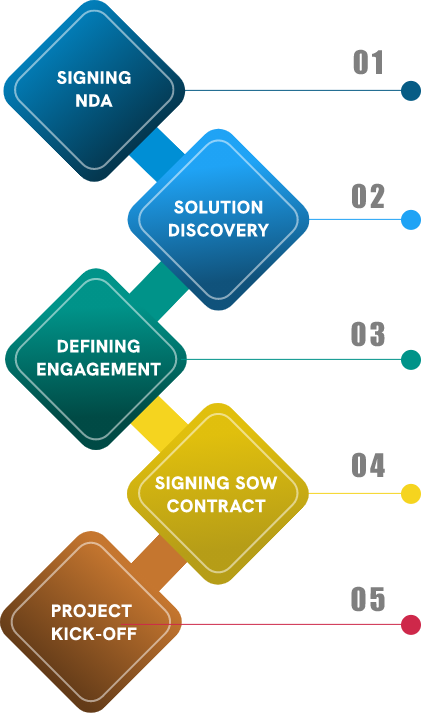

Trusted and Proven Engagement Model

- A nondisclosure agreement (NDA) is signed so that no sensitive information revealed over the course of doing business together is disclosed.

- Our NDA-driven process is established to keep clients’ data and IP safe and secure.

- The solution discovery phase is all about knowing your target audience, writing down requirements, and creating a full scope for the project.

- This helps clarify the goals and limitations, and deliver quality products and services.

- Our engagement model defines the project size, development plan, duration, concept, proof of concept (POC), etc.

- Based on these scenarios, clients may agree to a particular engagement model (Fixed Bid, T&M, Dedicated Team).

- The SOW document shall list details on project requirements, project management tools, tech stacks, deliverables, milestones, timelines, team size, hourly/monthly rate cards, billable hours and invoice details.

- On signing the SOW, an official project kick-off meeting shall be initiated.

- Our implementation approach, ecosystem, tools, solutions modelling, sprint plan, etc. shall be discussed during this meeting.

Our Award-Winning Team

A seasoned AI & ML team of young, dynamic, and curious minds, recognized with global awards for making a significant impact on improving human lives.

Awarded the Bronze Trophy at the CII National Competition on Digitization, Robotics & Automation (DRA) – Industry 4.0

Named winner among 1,000 contestants at the TechSHack Hackathon

Related Success Stories

Related Insights

Machine learning involves computers discovering how they can perform tasks without being explicitly programmed to do so…

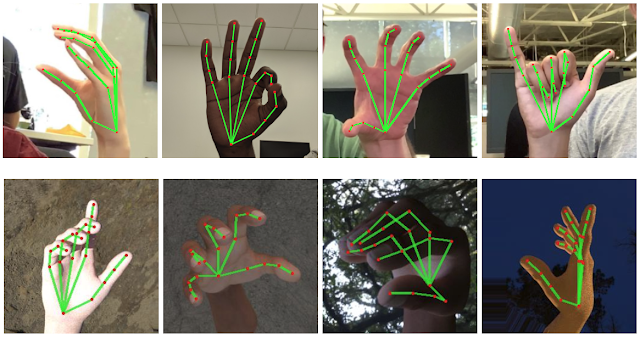

MediaPipe is a cross-platform (Android, iOS, web) framework used to build machine learning pipelines for audio, video, time-series data, etc…